Generating AI Appointment Triage & At-Risk Detection Applications at Mental Health Clinics

Exploring how AI-supported triage can reduce clinician burden while preserving trust in mental health intake, resulting in a framework for ethical deployment across campus services.

COMPLETED:

May 2024

SKILLS:

Literature Review

Problem Framing

UX Interviews

IMPACT:

Delivered a research-backed proposal to stakeholders

Conducted 15+ interviews & consolidated 50+ survey responses

THE "MESS"

Mental health clinics are overburdened with therapy requests. How might we use AI to streamline the patient care process while ensuring trust & safety among patients?

Mental health clinics receive appointment requests in several unstructured forms, so staff must sort through them manually to manage scheduling, and early engagement with at-risk patients can be missed. Phone-based screening creates backlogs, and limited therapist availability makes prioritization critical but difficult.

Because this was a proposal for Machani Robotics' CEO, success meant delivering a research-backed recommendation for integrating an AI humanoid into UCSD's mental health services. Rather than building a functional prototype, we validated three key assumptions: student comfort with AI in mental health, barriers to existing care, and whether humanoid interaction reduced hesitation.

To Do:

Preserve patient trust during triage by identifying concerns about AI integration

Reduce clinician burden during intake with AI-Assisted Appointment Triage

Identify at-risk cases earlier without over-automation

KEY INSIGHTS & DESIGN IMPLICATIONS

Research Outcomes Guided Decisions on How to Use AI

Students were open to AI assistance when it supported human judgment rather than replaced it.

68% of students were comfortable with AI-assisted (not autonomous) triage.

Initial discomfort with a humanoid form decreased significantly after direct interaction.

Positive sentiment increased from 36% to 72% after direct interaction with RIA.

Follow-up conversations mattered more than one-time triage accuracy.

Students defaulted to therapy due to limited knowledge of alternative CAPS resources.

SOLUTION OVERVIEW

Defining AI Applications & Strategy for Clinic Integration

As our interviews revealed, hesitation about the presence of AI called for an additional strategic component in our demo: how the AI application is introduced and integrated into the clinic.

Implement the AI Humanoid as a Resource Directory

RIA can reduce demand for therapy by directing students to the many Counseling and Psychological Services (CAPS) offerings they are not aware of. Instead of leaving students to browse misleading web pages, RIA can reduce abandonment through custom recommendations & feedback collection.

SUPPORTING RESEARCH:

Interviews showed that students know very little about CAPS resources other than therapy; as a result, demand for therapy may reflect not just need but also a lack of awareness of other options.

Develop a Patient-Centered Mobile Application to Continue Conversations

Patients can access RIA remotely through a mobile application. After in-person or virtual interactions, the app lets patients continue check-ups through an in-app avatar.

SUPPORTING RESEARCH:

As an embodied humanoid, RIA is primarily accessed in person. However, interviewees identified continued conversations as an important feature, and as trust builds over those conversations, RIA can better identify at-risk patients.

Dual Risk Notification System

RIA will flag at-risk conversations for nearby & available staff to review. The implementation will still ensure that human interaction remains available.

SUPPORTING RESEARCH:

In general, students reported feeling most comfortable with AI-assisted identification, as opposed to fully AI or fully human identification, because they felt it reduces human bias while leaving room for nuance.

INTEGRATION STRATEGY

Increase AI Humanoid Exposure & Interaction Opportunities

To ease fears about RIA being a humanoid, students will have the opportunity to engage with RIA through in-person office hours held at UCSD’s Entrepreneurship Center & through workshops where RIA is used in demonstrations. As our Office Hours observation showed, students had a more positive impression of the AI integration once they were able to use the tool.

RESEARCH PROCESS

Gathering a Holistic View of the Problem Space to Inform Specific AI Use Cases.

My information-gathering process followed this sequence:

Expert Consult

We sat down with Dr. Savita Bhakta, Director of College Mental Health at UC San Diego, to understand the problem space from the staff’s point of view.

Literature Review

We reviewed current research on the benefits & limitations of AI-powered humanoids to inform our prototype ideas.

Digital Survey

We created a Google Form to collect quantitative input on anticipated comfort levels with a humanoid & to explore the problem space.

Semi-Structured Interviews

I interviewed survey respondents who indicated they were open to discussing their thoughts further. These interviews also served to present our product ideas & gather feedback.

Controlled Observations

I organized an "office hours" event with our AI humanoid where people could ask RIA questions. Participants answered the same set of comfort-level questions before & after the interaction, which showed whether a lack of familiarity with humanoids was part of the problem.

LITERATURE REVIEW

Determining Previously Established Strengths of AI & Humanoids in Healthcare

Consistently Empathetic Responses to Patient Needs

A panel of licensed health care professionals preferred ChatGPT's responses over physicians' responses 79% of the time and rated ChatGPT's responses as higher in quality and more empathetic.

A Design to Invite Trust and Conversation

RIA has a “clearly non-human appearance”; robots with this kind of appearance were found to be liked more than robots that try to appear humanlike.

Extended Conversation Memory

RIA can remember elements of a conversation for a smoother flow. In the bigger picture, this could help quickly identify risks related to family history, past experiences, and similar factors.

EXPERT CONSULTATION

Examining Project Context with Dr. Savita Bhakta, Director of College Mental Health at UC San Diego.

At-risk patients are identified through phone screening. There is a backlog of appointments due to a lack of knowledge about where to call and limited therapist availability. Currently, therapy is the most requested treatment among UCSD students.

Ideally, RIA would help case workers identify at-risk patients before human verification, or serve as an alternative aid for those who are not at risk.

Defining "At Risk"

High Risk: higher-impact concerns, such as death, suicide attempts, low stress tolerance, and inability to self-regulate

Low Risk: changes from usual behavior, inability to sleep, general disinterest

It is important to immediately flag high-risk behavior.
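To make the two tiers concrete, here is a minimal Python sketch of how a flagging rule might route conversations. It assumes an upstream step (not part of this proposal) has already tagged each conversation with indicator labels; `RiskTier`, `Flag`, `classify_conversation`, and the indicator sets are hypothetical names, and the labels only paraphrase Dr. Bhakta's examples rather than forming a clinical screening instrument. In keeping with AI-assisted (not autonomous) triage, every flag still goes to staff; the tier only decides whether staff are alerted immediately.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    NONE = 0
    LOW = 1
    HIGH = 2


# Hypothetical indicator labels paraphrasing the expert's examples.
# Assumes an upstream step has already tagged the conversation with these labels.
HIGH_RISK_INDICATORS = {"death", "suicide attempt", "low stress tolerance", "unable to self-regulate"}
LOW_RISK_INDICATORS = {"behavior change", "unable to sleep", "general disinterest"}


@dataclass
class Flag:
    tier: RiskTier
    matched: list = field(default_factory=list)
    alert_staff_now: bool = False  # high risk: notify nearby, available staff immediately


def classify_conversation(indicators: set) -> Flag:
    """Assign a risk tier; a human still reviews every flagged conversation."""
    high = sorted(indicators & HIGH_RISK_INDICATORS)
    if high:
        return Flag(RiskTier.HIGH, high, alert_staff_now=True)
    low = sorted(indicators & LOW_RISK_INDICATORS)
    if low:
        # Low risk: queue for staff review instead of sending an immediate alert.
        return Flag(RiskTier.LOW, low)
    return Flag(RiskTier.NONE)


if __name__ == "__main__":
    flag = classify_conversation({"unable to sleep", "suicide attempt"})
    print(flag.tier.name, flag.matched, flag.alert_staff_now)  # HIGH ['suicide attempt'] True
```

Checking the high-risk set first means a conversation showing both kinds of indicators is always escalated, matching the point that high-risk behavior should be flagged immediately.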

SURVEY

Gathering Survey Responses to Broadly Examine Student Sentiments Towards AI Integration

Median Age: 20 years (range 18 to 25, with one outlier at 31)

Sex Distribution: 65% Female, 30% Male

International Students: 45.5%

CAPS Familiarity: 86.7% of our sample knew of CAPS, and 60% had used CAPS at UCSD

Biggest Barrier to Care was Limited Availability of Counseling

In a multi-select question, most surveyed students selected limited availability of counseling as the biggest barrier to receiving mental health support at UC San Diego. This was followed by a lack of awareness about available resources and long wait times.

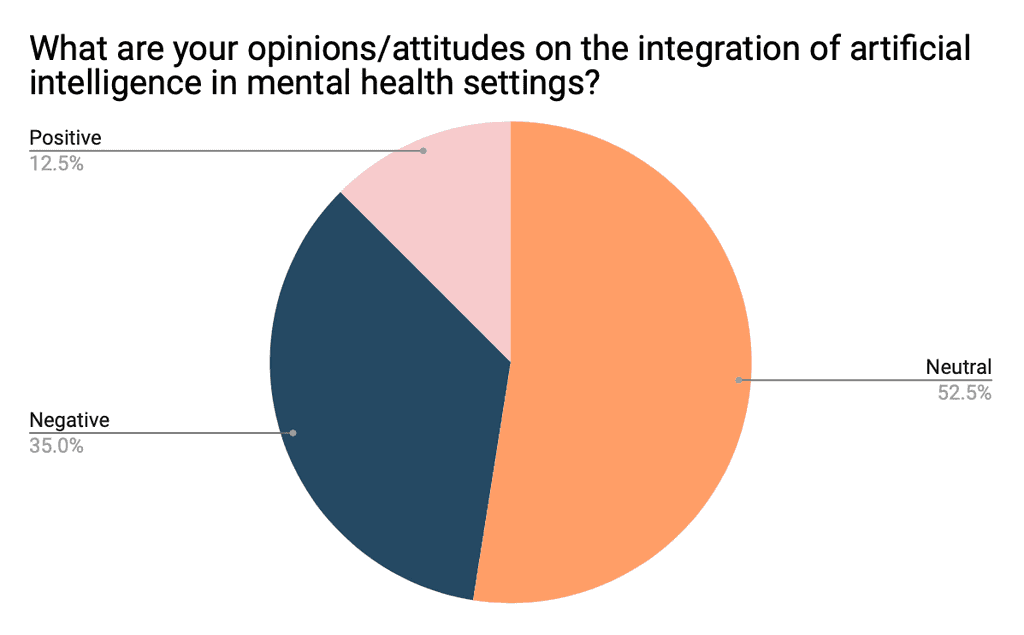

Attitudes toward integrating AI into mental health are split between neutral and negative

14 of the 21 students who answered "neutral" cited a lack of personal experience & understanding as their reason, so in our next phase of research we provided more information on how AI might be used.

"could be helpful to have an instant response on a situation and get better clarification"

"I like the idea that AI could offer less biased feedback and draw from a larger base of knowledge"

"I feel what makes therapy so effective is the human connection..."

There is widespread hesitation toward humanoids in mental health…

Overall, there were many negative attitudes towards the idea of humanoid robots entering the mental health space. Some of the reasons:

"Since there's little known about the program of AI and robotics, I don't trust them with my information (black box theory)"

"If I was presented with this I would feel sad that no real person took the time to try and talk to me"

…however, the distribution leans more positive among international students

Many international students cited "language barriers" as a reason they might interact with a humanoid robot over a person in a mental health setting.

"[A humanoid can] address our issues while taking our social context into consideration"

SEMI-STRUCTURED INTERVIEW

Inviting Survey Participants to Elaborate on Responses and Provide Feedback on Initial Use Cases.

BARRIERS TO ACCESSING RESOURCES

Scheduling delays were cited as one of the biggest barriers to accessing on-campus mental health resources, along with lack of awareness and lack of accessibility.

FAMILIARITY WITH EXISTING CAPS RESOURCES

Non-counseling services (including workshops, well-being forums, & behavioral health services) from CAPS were generally unfamiliar to students.

COMFORT LEVELS WITH RIA

Most interviewees were moderately comfortable with the idea of sharing mental health experiences with RIA (the AI humanoid), and their comfort ratings increased when they were told that human experts would monitor and handle that data.

TRIAGE USE CASE

Reception was slightly positive overall toward answering screening questions in conversation with AI rather than on a screening form. Interviewees emphasized that remote scheduling feels more comfortable, while noting that their preference depends on the level of crisis.

RESOURCE DIRECTORY USE CASE

Generally positive reception to implementing RIA as a “humanoid directory” for human-operated wellness activities, workshops, and resources on campus.

IDENTIFYING AT-RISK PATIENTS USE CASE

Students generally want transparency about risk detection. Some are wary of losing nuance, while others are neutral because the approach resembles therapists' existing role as mandated reporters.

CONTROLLED OBSERVATION

Testing whether meeting RIA might alleviate fears around human-humanoid interaction.

I reached out to the director of UC San Diego's entrepreneurship program to set up an "Office Hours" event where people could meet RIA and ask her questions directly to satisfy their curiosity. None of the participants had ever interacted with a humanoid before.

Pre-Interaction Highlights

Participants with strong views were split between "very positive" and "very negative" toward integrating artificial intelligence in mental health settings, but most participants (63.6%) felt neutral.

Quotes

"Sharing personal information with a robot is scary because you don’t know if they’re correctly understanding what you’re telling them."

"…there is potential for positive interactions, but also significant risks regarding privacy and manipulation"

"Sharing mental health experiences is very vulnerable and in my opinion requires human connection"

During & Post-Interaction Highlights

Participants' reactions after meeting RIA were largely positive, and a higher percentage of students marked "Positive" post-interaction (from 36% to 72%). While many were still cautious, they were able to give more comprehensive feedback on our use case.

Observations

Participants became comfortable with RIA very quickly when they spoke in their native (non-English) language

Participants most often asked about moral dilemmas, testing RIA's ability to make moral decisions

Participants were more interested in her knowledge base than her ability to act as a therapist.

REFLECTION

The Importance of Defining the Problem

At the start of the project, we struggled to figure out how to tackle a project of such large scope in two areas where we had little experience: humanoids and healthcare. We began by jumping straight to brainstorming solutions and projects, but later returned to user research as a way to inspire solutions that were realistic and relevant; this was the part where I took the lead!

I also learned to consider the context of the project (in this case, my school) instead of focusing on the product alone, which helped us uncover existing mental health support that was not visible to students. It was a big light bulb moment when I discovered exactly where RIA would be helpful.

The most surprising finding was the language barrier insight; it completely reframed who would benefit most from RIA. International students' enthusiasm for multilingual support showed us that AI humanoids could address equity gaps, not just efficiency problems. This taught me that exploratory research often reveals problems more valuable than the ones you set out to solve.